Transport Layer – Quality of Service (QoS) in Networking and Congestion Control (Traffic Management)

Spoiler : This post is long, yet interesting!

In the previous post, we looked at some of the important functions of the Transport Layer and at the basic terms associated with it.

Now we will start exploring the two important protocols which have been implemented in this layer. One of them is connection-oriented and the other one supports the connectionless service.

TCP (Transmission Control Protocol) is a connection-oriented protocol and UDP (User Datagram Protocol) is a connectionless protocol. Before looking at them, we should first understand the concept of Quality of Service (QoS), which is an important aspect of any networking device.

What is Quality of Service (QoS)?

Quality of Service and congestion control are two interrelated topics. That basically means that if we are able to improve one of them, the other tends to improve as well.

In simple words, if we avoid or reduce congestion, we are indirectly improving the quality of service.

Congestion (and with it, quality of service) is not an issue in one single layer only. QoS and congestion are major factors in three layers, namely :

- Data link layer

- Network Layer

- Transport Layer

So instead of explaining the same topic three times, we will cover it in detail in the current post. For both topics, we should first understand the traffic parameters:

Average data rate : The average data rate is the number of bits sent during a period of time, divided by the number of seconds in that period, i.e. average data rate = (amount of data) / (time).

Peak data rate : The peak data rate defines the maximum data rate of the traffic. It indicates the peak bandwidth that the network requires for the traffic to pass through without changing its data flow.

Maximum burst size : The maximum burst size normally refers to the maximum length of time the traffic is generated at the peak rate.

Effective bandwidth : The effective bandwidth is the bandwidth that the network needs to allocate for the flow of traffic. It is a function of three values, i.e. the average data rate, the peak data rate, and the maximum burst size.
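To make the first three parameters concrete, here is a small Python sketch. It assumes the traffic has been recorded as one bit count per second; the function name `traffic_parameters` and the sample numbers are made up for illustration only. Effective bandwidth is left out because the exact function that combines the three values is not covered in this post.

```python
def traffic_parameters(bits_per_second):
    """Summarise a flow from per-second bit counts (hypothetical samples).

    Assumes a non-empty list with exactly one sample per second.
    """
    total_bits = sum(bits_per_second)
    duration = len(bits_per_second)           # seconds observed
    average_rate = total_bits / duration      # amount of data / time
    peak_rate = max(bits_per_second)          # highest rate seen

    # Maximum burst size: longest run of consecutive seconds at the peak rate.
    longest = current = 0
    for rate in bits_per_second:
        current = current + 1 if rate == peak_rate else 0
        longest = max(longest, current)
    return average_rate, peak_rate, longest


samples = [200, 200, 1000, 1000, 1000, 200, 400, 1000]
avg, peak, burst = traffic_parameters(samples)
print(avg, peak, burst)  # 625.0 1000 3
```

Here the flow averages 625 bits per second, peaks at 1000 bits per second, and stays at that peak for at most 3 seconds in a row.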

Congestion in a network may occur if the load on the network (the number of packets sent to the network) is greater than the capacity of the network (the number of packets that the network can handle).

To understand the term QoS, we should first go through the important terms associated with congestion in a network. Congestion control mechanisms are mainly divided into two categories, i.e. open-loop and closed-loop congestion control. Let us see them one by one :

Open Loop Congestion Control Mechanism :

In open-loop congestion control, remedies are applied to prevent congestion before it happens. In these mechanisms, congestion control is handled by either the source or the destination. Following are some of its methods:

Retransmission policy : Retransmission is sometimes unavoidable. If the sender feels that a sent packet is lost or damaged, the packet needs to be retransmitted. Retransmission in general may even increase congestion in the network.

Acknowledgement policy : The acknowledgment policy imposed by the receiver may also affect congestion. If the receiver doesn’t acknowledge every packet it receives, it may even slow down the sender and help prevent congestion.

Discarding policy : A good discarding policy by the routers may prevent congestion and at the same time may not harm the integrity of the transmission.

Admission policy : An admission policy, which is a quality-of-service mechanism, can also prevent congestion in virtual-circuit networks. A router can deny establishing a virtual circuit connection if there is congestion in the network or if there is any possibility of future congestion.

Closed-loop congestion control policy

Closed-loop congestion control mechanisms try to reduce congestion after it happens. Some of its methods are :

Backpressure : The technique of backpressure refers to a congestion control mechanism in which a congested node stops receiving data from the immediate upstream node or nodes. This may cause the upstream node or nodes to become congested, and they, in turn, reject data from their own upstream node or nodes.

Implicit Signalling : In implicit signaling, there is no communication between the congested node or nodes and the source. The source guesses that there is congestion somewhere in the network from other symptoms.

Explicit Signalling : The node that experiences congestion can explicitly send a signal to the source or destination. In the explicit signaling method, the signal is carried inside the packets that carry data, rather than in a separate packet.

Now, equipped with a basic understanding of congestion and its remedies, we can proceed to understand the term QoS (quality of service) in detail. QoS is the quality that every flow seeks to attain (smooth movement without any congestion).

To achieve quality of service, it is imperative to control the traffic at different nodes in the network, and various traffic management techniques have been deployed to do so. Some of the factors that help us understand this topic with more clarity are :

1. Connection Establishment Delay

Source-to-destination delay is a flow characteristic. Applications can tolerate delay to different degrees. For example, telephony, audio conferencing, video conferencing, and remote login need minimum delay, while delay in file transfer or e-mail is less important.

The time difference between the instant at which a transport connection is requested and the instant at which it is confirmed is called the connection establishment delay. The shorter the delay, the better the quality of service (QoS).

2. Connection Establishment Failure Probability

It is the probability that a connection is not established even after the maximum connection establishment delay. This can be due to network congestion, lack of table space, or some other problems.

3. Throughput

It mainly measures the number of bytes of user data transferred per second, measured over some time interval. It is measured separately for each direction.

Different applications need different bandwidths. In video conferencing, we need to send millions of bits per second to refresh a color screen while the total number of bits in an e-mail may not reach even a million.

4. Transit Delay

It is the time between a message being sent by the transport user on the source machine and its being received by the transport user on the destination machine.

5. Residual Error Ratio

It measures the number of lost or distorted messages as a fraction of the total messages sent. Ideally, the value of this ratio should be zero and practically it should be as small as possible.
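As a quick illustration, the ratio can be computed as below. This is only a sketch of the definition above; the function name and the message counts are hypothetical.

```python
def residual_error_ratio(sent, lost, distorted):
    """Fraction of sent messages that were lost or distorted."""
    if sent <= 0:
        raise ValueError("sent must be a positive number of messages")
    return (lost + distorted) / sent


# Hypothetical counts: 1000 messages sent, 3 lost, 2 distorted.
ratio = residual_error_ratio(sent=1000, lost=3, distorted=2)
print(ratio)  # 0.005
```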

6. Priority

This parameter provides a way for the user to show that some of its connections are more important (have higher priority) than the other ones.

This is important while handling congestion, because the higher-priority connections should get service before the low-priority connections.

8. Resilience

Due to internal problems or congestion, the transport layer may spontaneously terminate a connection. The resilience parameter gives the probability of such a termination.

9. Reliability

Lack of reliability means losing a packet or an acknowledgment (which is sent once a packet successfully reaches the destination), which entails retransmission.

However, not all application programs are equally sensitive to reliability. For example, file transfer and e-mail require reliable service, unlike telephony or audio conferencing.

10. Jitter

Jitter is the variation in delay for packets associated with the same flow. For applications such as audio and video applications, it does not matter if the packets arrive with a short or long delay as long as the delay is the same for all packets.

High jitter means the difference between the delays of the packets is large; low jitter means the variation is small.
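The sketch below shows the idea with numbers. Jitter can be quantified in more than one way; here it is assumed to be the mean absolute difference between the delays of consecutive packets, which is just one simple choice. The send and arrival times are made-up values.

```python
def delays(send_times, arrival_times):
    """Per-packet delay: arrival time minus send time."""
    return [a - s for s, a in zip(send_times, arrival_times)]


def jitter(delay_list):
    """Mean absolute difference between consecutive delays (one simple definition)."""
    diffs = [abs(b - a) for a, b in zip(delay_list, delay_list[1:])]
    return sum(diffs) / len(diffs) if diffs else 0.0


send = [0, 1, 2, 3]
steady = delays(send, [20, 21, 22, 23])   # every packet is delayed by 20
uneven = delays(send, [21, 23, 24, 28])   # delays are 21, 22, 22, 25

print(jitter(steady))  # 0.0
print(jitter(uneven))
```

The first flow has a long delay but no jitter, so audio or video would play smoothly. The second flow has a similar delay but the gaps between packets keep changing.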

Now we should understand some of the techniques that play a crucial role in reducing the effect of congestion at a node in the network, and thus in improving the QoS. The most common methods are :

- FIFO queuing

- Priority queuing

- Weighted fair queuing

- Traffic shaping

- Resource Reservation

- Admission Control

Note : In all these methods, our main aim is to turn bursty traffic (a large amount of traffic arriving in a very short interval) into regulated traffic, thereby reducing congestion.

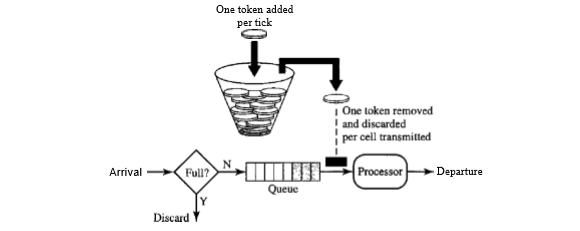

1. FIFO Queuing in QoS

In the first-in, first-out (FIFO) queuing method, packets wait in a buffer (or queue) until the node (a switching device, e.g. a router or switch) is ready to process them. If the average arrival rate is higher than the average processing rate, the queue will fill up and new packets will be discarded.

Only one buffer is required for this. A FIFO queue is familiar to anyone who has had to wait for a train at a metro station.
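A minimal Python sketch of this behaviour is shown below. The class name `FifoQueue`, the buffer size of 3, and the arrival pattern are assumptions chosen only to show packets being discarded once arrivals outpace processing.

```python
from collections import deque


class FifoQueue:
    """Single bounded buffer; new packets are discarded when it is full."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.buffer = deque()
        self.dropped = 0

    def enqueue(self, packet):
        if len(self.buffer) >= self.capacity:
            self.dropped += 1          # queue is full: discard the new packet
            return False
        self.buffer.append(packet)
        return True

    def dequeue(self):
        # The packet that has waited longest leaves first.
        return self.buffer.popleft() if self.buffer else None


queue = FifoQueue(capacity=3)
served = []
for tick in range(6):
    # Two packets arrive per tick, but the node processes only one per tick.
    queue.enqueue(f"p{2 * tick}")
    queue.enqueue(f"p{2 * tick + 1}")
    served.append(queue.dequeue())

print(served)         # ['p0', 'p1', 'p2', 'p3', 'p4', 'p6']
print(queue.dropped)  # 4
```

Packets leave in the order they arrived, and once the buffer is full (from the third tick onwards) one new packet is lost on every tick.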

2. Priority Queuing in QoS

In this queuing method, packets are first assigned to a priority level. Each priority level has its own queue. The packets in the highest-priority queue are processed first.

Packets in the lowest-priority queue are processed last. The system doesn’t stop serving a queue until it is empty. A priority queue can generally provide better QoS than a FIFO queue because higher-priority traffic, such as multimedia, can reach the destination with less delay.

However, there is a potential drawback. If there is a continuous flow in a high-priority queue, the packets in the lower-priority queues will never get a chance to be processed. This condition is called starvation. A threshold can be used, and packets are then accepted according to their priority.
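The sketch below models strict priority queuing with one queue per level and also shows the drawback. The class name `PriorityScheduler` and the packet labels are illustrative assumptions, and index 0 is taken to be the highest priority.

```python
from collections import deque


class PriorityScheduler:
    """One queue per priority level; index 0 is the highest priority."""

    def __init__(self, levels):
        self.queues = [deque() for _ in range(levels)]

    def enqueue(self, packet, priority):
        self.queues[priority].append(packet)

    def dequeue(self):
        # Always serve the highest-priority queue that is not empty.
        for q in self.queues:
            if q:
                return q.popleft()
        return None


sched = PriorityScheduler(levels=2)
sched.enqueue("email-1", priority=1)    # low-priority packet arrives first

order = []
for tick in range(3):
    sched.enqueue(f"video-{tick}", priority=0)  # high priority keeps arriving
    order.append(sched.dequeue())

leftover = sched.dequeue()  # served only once the high-priority flow pauses
print(order)     # ['video-0', 'video-1', 'video-2']
print(leftover)  # email-1
```

Even though the e-mail packet arrived first, it waits for as long as video packets keep arriving.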

3. Weighted Fair Queuing in QoS

A better scheduling method is weighted fair queuing. In this technique, the packets are still assigned to different classes and admitted to different queues. The queues, however, are weighted based on their priority: a higher priority means a higher weight, so high-priority packets get better QoS.

The system processes packets in each queue in a round-robin fashion, with the number of packets selected from each queue based on the corresponding weight. If the system doesn’t impose priority on the classes, all weights can be equal. In this way, we can have fair queuing with priority.
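The round-robin description above can be sketched as a simple weighted round robin. Real weighted fair queuing implementations are more involved (for example, they account for packet sizes), so treat this as a simplified model; the function name, the weights 3, 2, 1 and the packet labels are assumptions.

```python
from collections import deque


def weighted_round_robin(queues, weights, rounds):
    """Visit each queue in turn; take up to `weight` packets from it per round."""
    sent = []
    for _round in range(rounds):
        for q, weight in zip(queues, weights):
            for _slot in range(weight):
                if not q:
                    break              # this queue has nothing left this round
                sent.append(q.popleft())
    return sent


high = deque(["H1", "H2", "H3", "H4", "H5", "H6"])
mid = deque(["M1", "M2", "M3", "M4"])
low = deque(["L1", "L2"])

order = weighted_round_robin([high, mid, low], weights=[3, 2, 1], rounds=2)
print(order)
# ['H1', 'H2', 'H3', 'M1', 'M2', 'L1', 'H4', 'H5', 'H6', 'M3', 'M4', 'L2']
```

Unlike strict priority queuing, the low-priority queue is served in every round, just with a smaller share.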

4. Traffic Shaping in QoS

Traffic shaping is a mechanism to control the amount and the rate (flow) of the traffic sent to the network. Two techniques deployed for this are the leaky bucket and token bucket algorithms (traffic management algorithms).

4.1 Leaky Bucket (Leaky Bucket Algorithm)

This is implemented using a buffer at the interface level. If a bucket has a small hole at the bottom, the water leaks from the bucket at a constant rate as long as there is water in the bucket.

The rate at which the water leaks does not depend on the rate at which the water is input to the bucket unless the bucket is empty. In the same way, a buffer stores the data and forwards it at regular intervals ‘T’ (T is based upon network capacity).

The input rate can vary to an extent, but the output rate remains constant. Similarly, in networking, a technique called leaky bucket can smooth out bursty traffic. Bursty chunks (of traffic) are stored in the bucket and sent out at an average rate.

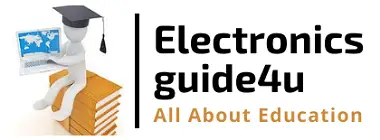
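Here is a small simulation of the leaky bucket idea, counting whole packets per clock tick. The function name, the bucket capacity of 8, the leak rate of 2 packets per tick, and the arrival pattern are all assumptions for illustration.

```python
def leaky_bucket(arrivals, capacity, leak_rate):
    """arrivals[t] is the number of packets arriving in tick t.

    Returns (packets sent per tick, packets dropped because the bucket was full).
    """
    bucket = 0
    dropped = 0
    sent_per_tick = []
    for arriving in arrivals:
        accepted = min(arriving, capacity - bucket)   # overflow is discarded
        dropped += arriving - accepted
        bucket += accepted
        sent = min(bucket, leak_rate)                 # constant output rate
        bucket -= sent
        sent_per_tick.append(sent)
    while bucket > 0:                                 # drain what is still stored
        sent = min(bucket, leak_rate)
        bucket -= sent
        sent_per_tick.append(sent)
    return sent_per_tick, dropped


out, dropped = leaky_bucket([10, 0, 0, 4, 0], capacity=8, leak_rate=2)
print(out)      # [2, 2, 2, 2, 2, 2]
print(dropped)  # 2
```

The input is bursty (10 packets at once, then 4 more later), but the output is a steady 2 packets per tick. The price is that 2 packets of the first burst do not fit in the bucket and are lost.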

4.2 Token Bucket (Token Bucket Algorithm)

The leaky bucket is a very restrictive and rigid algorithm. For example, if a host is not sending for a while, its bucket becomes empty. Now if the host has bursty data (a large amount of data arriving in a short interval), the leaky bucket allows only an average rate. In other words, the time when the host was idle is not taken into account.

On the other hand, the token bucket algorithm allows idle hosts to accumulate credit for the future in the form of tokens. For instance, at each clock tick the system sends ‘n’ tokens to the bucket, and it removes one token for every cell (or byte) of data sent. Thus, with the token bucket, the probability of discarding packets and the data delay are both lower.
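The following sketch shows how saved-up tokens let a burst through. It assumes tokens are added at the start of each tick, that the bucket holds at most `capacity` tokens, and that one token is spent per packet; the names and numbers are hypothetical.

```python
def token_bucket(arrivals, capacity, tokens_per_tick):
    """arrivals[t] is the number of packets arriving in tick t.

    Returns the number of packets sent in each tick.
    """
    tokens = 0
    backlog = 0                      # packets waiting for a token
    sent_per_tick = []
    for arriving in arrivals:
        # An idle host keeps collecting tokens, up to the bucket capacity.
        tokens = min(capacity, tokens + tokens_per_tick)
        backlog += arriving
        sent = min(backlog, tokens)  # one token is removed per packet sent
        tokens -= sent
        backlog -= sent
        sent_per_tick.append(sent)
    return sent_per_tick


out = token_bucket([0, 0, 0, 7, 0, 3], capacity=6, tokens_per_tick=2)
print(out)  # [0, 0, 0, 6, 1, 3]
```

The host is idle for three ticks and collects 6 tokens, so 6 of the 7 burst packets go out immediately in the fourth tick. A leaky bucket with the same rate of 2 per tick would have needed four ticks to send them.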

5. Resource Reservation in QoS

A flow of data basically needs resources such as a buffer, bandwidth, CPU time, and so on to maintain a steady flow. The quality of service (QoS) is improved if these resources can be reserved beforehand.

6. Admission Control in QoS

It is the mechanism used by a router or a switch (or any networking device) to accept or reject a flow based on predefined parameters called flow specifications.

Before a router accepts any flow for processing, it checks the flow specifications to see whether its capacity (in terms of bandwidth, buffer size, CPU speed, etc.) and its previous commitments to other flows can handle the new flow (thus implementing admission control for the flow of traffic).
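A rough sketch of such a check is given below. The `FlowSpec` fields, the `Router` class, and all the capacities are hypothetical; a real device would consider more parameters than bandwidth and buffer space.

```python
from dataclasses import dataclass


@dataclass
class FlowSpec:
    """Resources a flow asks for (hypothetical, simplified fields)."""
    bandwidth_kbps: int
    buffer_kb: int


class Router:
    def __init__(self, bandwidth_kbps, buffer_kb):
        # Capacity that has not yet been committed to any flow.
        self.free_bandwidth = bandwidth_kbps
        self.free_buffer = buffer_kb

    def admit(self, flow):
        """Accept the flow only if the remaining capacity can handle it."""
        fits = (flow.bandwidth_kbps <= self.free_bandwidth
                and flow.buffer_kb <= self.free_buffer)
        if fits:
            # Reserve the resources so later flows see the commitment.
            self.free_bandwidth -= flow.bandwidth_kbps
            self.free_buffer -= flow.buffer_kb
        return fits


router = Router(bandwidth_kbps=1000, buffer_kb=64)
decisions = [
    router.admit(FlowSpec(bandwidth_kbps=600, buffer_kb=32)),
    router.admit(FlowSpec(bandwidth_kbps=500, buffer_kb=16)),
    router.admit(FlowSpec(bandwidth_kbps=300, buffer_kb=32)),
]
print(decisions)  # [True, False, True]
```

The second flow is rejected because only 400 kbps are left after the first commitment, while the smaller third flow still fits.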

Note: Congestion control is for switching networks and flow control is for any computer network.

Here comes the end of this long post. I hope you enjoyed it and gained some valuable insights about the QoS aspect of any networking device. See you soon in my next post, on the UDP protocol of the transport layer.

Aric is a tech enthusiast who loves to write about tech-related products and ‘How To’ blogs. An IT Engineer by profession, currently working in the Automation field at a software product company. Other hobbies include singing, trekking, and writing blogs.